Dear all,

I hope you’ve had time to recharge over Christmas!

Henry and I are back as 2022, the ‘coronation year’ for Generative AI, draws to a close. In our conversation, we take on some of the philosophical questions that GenAI raises. I am grateful to be able to discuss them in good faith with a thoughtful friend.

I hope you find these discussions useful.

This week we cover:

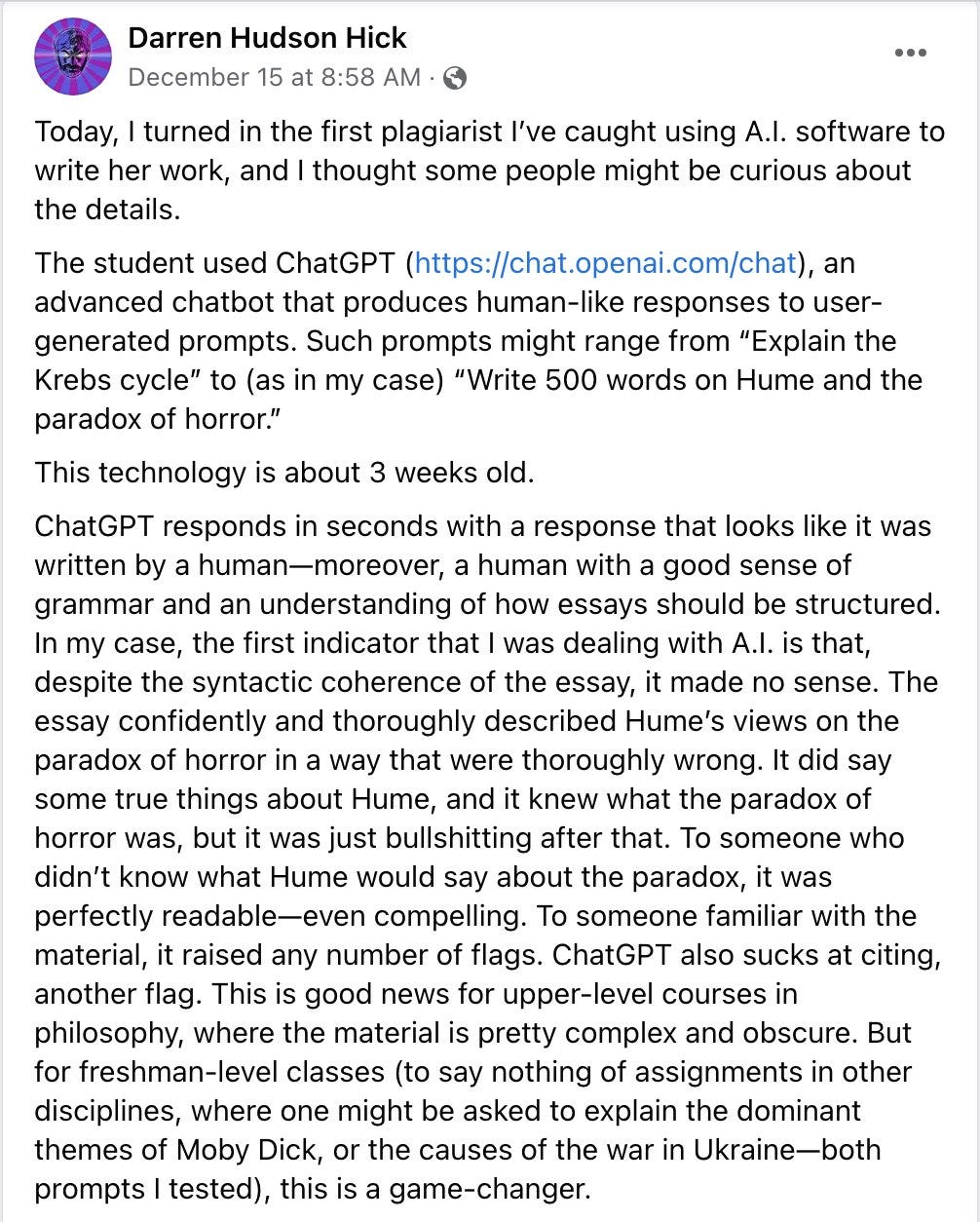

1. Professor catches student using ChatGPT to cheat

University student caught using ChatGPT — will coursework ever be the same?

How will we test for plagiarized content?

What’s the line between using AI as a tool vs cheating?

2. AI-Generated code

A new study finds that AI-generated code leads to security vulnerabilities.

Will AI code-generation systems augment or automate developers?

What does AI-generated code mean for copyright and licensing?

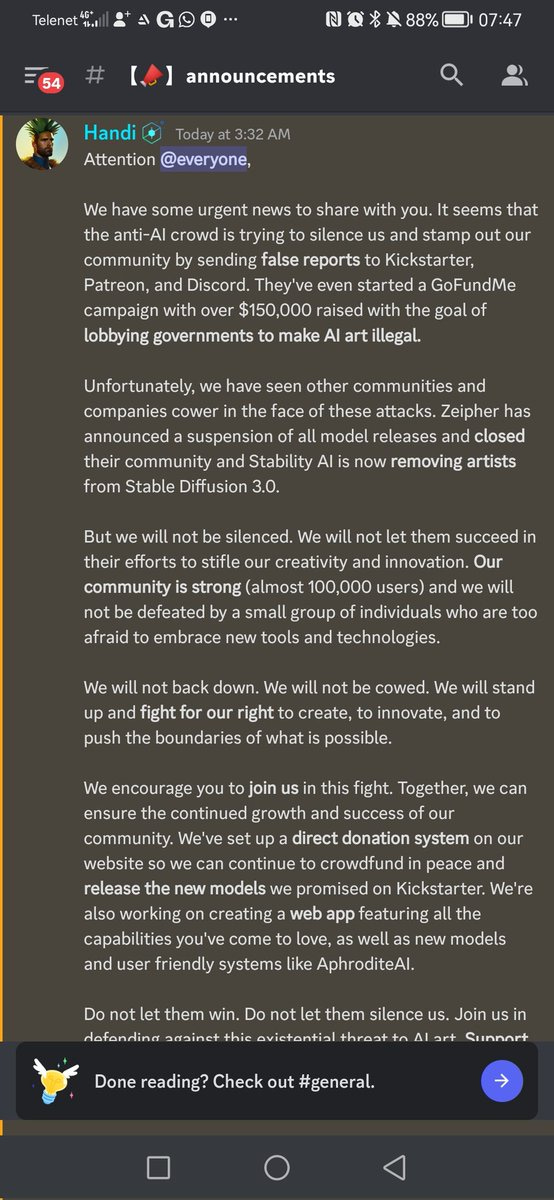

3. Unstable Diffusion kicked off Kickstarter

Can AI-generated porn ever be ethical?

Is AI-generated porn ripping off adult entertainers/illustrators?

Community-sourced training data for initiatives like Unstable Diffusion.

4. Google vs OpenAI: Release strategies

Google claims it has not released ChatGPT-like tools because it’s concerned about safety.

Does Google have similar capabilities to OpenAI under wraps?

Open vs closed AI systems: how should we weigh safety and accessibility?

5. Fake Zuck

What is the line between AI-generated satire and harmful content?

How do platforms moderate AI-generated content?

Content-moderation vs censorship.

Breakthrough or Bullshit?

We both agreed that AI-generated text and code are breakthroughs — although some of the hype around them is bullshit.

It’s worth remembering that models like GPT-3 and Codex (OpenAI models that generate text and code, respectively) are still early iterations. Their outputs are incredible, though far from perfect. But they will improve quickly.

I bet we will be shocked at how rudimentary they seem compared with what is possible even 12 months from now.

I can’t wait to see how it all evolves.

But, for now, enjoy the rest of the holidays!

Namaste and goodnight,

Nina
