ChatGPT Mania: From Meta to Musk – everyone wants some sweet LLM pie

Plus the $10,000 Nvidia chip powering the GenAI revolution

Happy Friday all,

🔥🔥🔥 We called it, right? This space is burning hot! Another insane week…

🦙 Just an hour after I published last week’s EGAI newsletter, Meta announced LLaMA. LLaMA is Meta’s OWN large language model. And get this: they're claiming it outperforms OpenAI's GPT-3 (which, by the way, is what ChatGPT is based on).

Yann LeCun, Meta's Chief AI Scientist, made the same claim, and I just want to give Yann a little shout-out. Just a few days before ChatGPT reached a whopping 100 million users, he infamously said it wasn't ‘that innovative.’ Talk about bad timing. But now that LLaMA's out, he might just have the last laugh.

We even had a bit of a Twitter frisson, with me acknowledging the good-natured shade I threw his way, and him responding with not one, but THREE laughing emojis. This all makes him a rather good sport in my book. Douze points, Yann!

Moving swiftly along, though, let’s get to why LLaMA is important in the context of three GenAI trends that I see unfolding.

⚡⚡⚡ Big Tech Pivoting Hard to GenAI

Again, we called it.

All the big tech players are now seriously jumping on the GenAI bandwagon – Microsoft, Google, AWS, and of course, Meta. (I am incredibly curious about what Apple's cooking up… they've been awfully quiet, haven't they?)

My sense is that all of this was a bit unexpected. I don’t think any of the Big Tech companies or anyone, except for me and a few others (yes, I am taking some credit here), really saw how quickly this would unfold.

That’s not to say they weren’t building ‘stuff’ or thinking about it. Clearly, they were – big innovations in LLMs came from Google all the way back in 2017, for example. But even they couldn't have predicted the lightning-fast speed at which these developments would be integrated into existing systems.

I have no doubt that 2023 is the year GenAI impacts billions of people, catalysing a step change in the economy and society. Hence the hard pivot.

This was also the week Meta announced the creation of its new GenAI product group. (Too bad that it spent all those billions pivoting to the Metaverse, eh? If only Zuckerberg had waited a little longer.) Even Musk wants in. He is apparently building his own ‘non-woke’ version of ChatGPT.

⬆️⬆️⬆️ Open Source is Levelling UP

Last week’s lead EGAI story was the AWS/Hugging Face collaboration – hugely important because of the way it democratises GenAI for the open-source community. LLaMA is another key development – because, unlike OpenAI, Meta has decided to open its model up: the code is open source, and the model weights are available to researchers on request.

LLaMA was trained on publicly available data sets such as Common Crawl (a kind of repository of everything on the net) and Wikipedia. Just a week after release, its model weights have already been mailed out.

This is a huge departure from what’s been happening so far.

So far, all the Big Guys have kept their secret sauce, well … secret. And despite the ‘Open’ in its name, OpenAI is anything but. All of its GenAI models are closed. We don’t know how they were trained or what data sets were used, and we don’t have open access to them.

This is changing — more and more foundational models are being released as open source, meaning that everyone can iterate and build on top of them. (To be clear: I am sure Meta's motivations are not entirely altruistic – and the same goes for AWS. They do this, in part, to ensure that their models, products and services are integrated into the GenAI ecosystem through widespread adoption.)

The philosophical question about how the GenAI revolution should unfold has thus far been presented as a dichotomy. Should a few well-resourced actors control foundational models – or should they be open-sourced so everyone can use them? In the end, it looks like both will be true.

MORE, MORE, MORE – A tidal wave of GenAI incoming

While OpenAI is not really ‘open,’ it is making its models more accessible with its new ChatGPT and Whisper APIs.

Developers can now integrate ChatGPT into apps, websites, and products via its API. And the real kicker? It's 90% cheaper to run than it was just a few months ago, thanks to some slick optimizations.
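To put that 90% figure in context, here is a rough back-of-the-envelope sketch. The two per-token prices are my assumptions (list prices as I understand them, not figures quoted above), and the monthly workload is entirely hypothetical, so treat this as an illustration rather than a quote.

```python
# Back-of-the-envelope comparison of API costs before and after the price cut.
# ASSUMPTION: both prices are illustrative list prices in USD per 1,000 tokens;
# they are not stated in this newsletter.
PRICE_PER_1K_OLD = 0.02   # assumed: earlier GPT-3.5-class model
PRICE_PER_1K_NEW = 0.002  # assumed: ChatGPT API model


def cost_usd(tokens: int, price_per_1k: float) -> float:
    """Cost in USD of processing `tokens` tokens at a per-1K-token price."""
    return tokens / 1000 * price_per_1k


def percent_saving(old_price: float, new_price: float) -> float:
    """Price reduction expressed as a percentage of the old price."""
    return (old_price - new_price) / old_price * 100


if __name__ == "__main__":
    monthly_tokens = 5_000_000  # hypothetical workload for a small app
    old = cost_usd(monthly_tokens, PRICE_PER_1K_OLD)
    new = cost_usd(monthly_tokens, PRICE_PER_1K_NEW)
    saving = percent_saving(PRICE_PER_1K_OLD, PRICE_PER_1K_NEW)
    print(f"Old: ${old:.2f}  New: ${new:.2f}  Saving: {saving:.0f}%")
```

Under those assumed prices, a tenfold drop in the per-token rate is exactly what a "90% cheaper" headline means: the same workload costs one tenth of what it did.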

But that's not all – Whisper, OpenAI's speech-to-text model, is also getting an API release. With the ability to transcribe speech in 99 languages and provide English translations, Whisper is a powerful and cheap tool. For just a dollar, it can transcribe almost 3 hours of speech.
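Here is where the "almost 3 hours for a dollar" figure comes from, as a minimal sketch. The per-minute price is my assumption (the launch list price as I understand it, not a number quoted above), and the function names are made up for illustration.

```python
# How much audio does a given budget buy at a flat per-minute price?
# ASSUMPTION: USD 0.006 per minute of audio is an illustrative list price;
# it is not stated in this newsletter.
WHISPER_PRICE_PER_MINUTE = 0.006


def hours_per_budget(budget_usd: float,
                     price_per_minute: float = WHISPER_PRICE_PER_MINUTE) -> float:
    """Hours of audio that `budget_usd` pays for at the given per-minute price."""
    return budget_usd / price_per_minute / 60


def transcription_cost(duration_minutes: float,
                       price_per_minute: float = WHISPER_PRICE_PER_MINUTE) -> float:
    """Cost in USD of transcribing `duration_minutes` minutes of audio."""
    return duration_minutes * price_per_minute


if __name__ == "__main__":
    print(f"$1 buys about {hours_per_budget(1.0):.2f} hours of audio")
    print(f"A 45-minute podcast costs about ${transcription_cost(45):.2f}")
```

At that assumed rate, one dollar covers roughly 167 minutes of audio, which is where "almost 3 hours" comes from.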

The GenAI revolution is still in its infancy, and we can expect to see an explosion of products, goods, tools, and services hitting the market in the coming months and years. So hold on to your hats, folks… this is only the tip of the iceberg. I wonder where we will be in 12 months’ time?

🌎 But as we marvel at the power of GenAI more broadly, we must also ask ourselves: what is the environmental cost of all this computation? I’m sure it's a dirty little secret that deserves a closer look. We’ll do that soon.

Now, let’s get to the best of the rest:

OpenAI, BBC and TikTok sign up for GenAI ‘Code of Conduct’

Ten companies, including OpenAI, TikTok, and the BBC, have committed to voluntary guidelines on creating and sharing AI-generated content responsibly, to ensure that it is not used to harm or disempower.

The guidelines call for transparency from both the creators of the technology and the distributors of the content, including disclosing when people might be interacting with AI-generated content.

The Partnership on AI (PAI) drafted the guidelines in consultation with over 50 organizations. The PAI is an AI research nonprofit.

YouTube CEO Teases GenAI Creator Tools

Neal Mohan, YouTube's new head, is already teasing YouTube’s upcoming Generative AI creator tools.

Mohan said, “Creators will be able to expand their storytelling and raise their production value, from virtually swapping outfits to creating a fantastical film setting through AI’s generative capabilities.”

He says YouTube will develop GenAI with “thoughtful guardrails.”

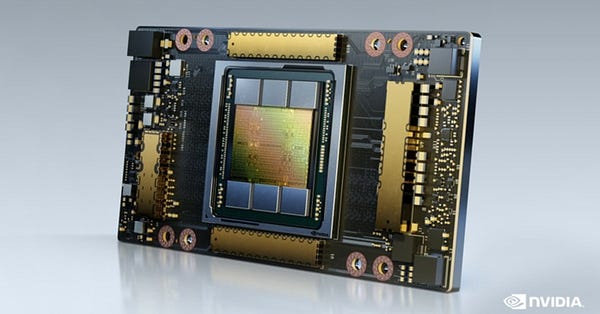

Nvidia’s $10,000 Chip Powers the GenAI Revolution

Nvidia's A100 chip has become one of the most critical tools in the GenAI revolution.

It is ideally suited for ML tasks, as it can perform many simple calculations simultaneously, making it important for training and using neural network models.

Most of the big companies or startups working on AI software require hundreds or thousands of A100 chips. They either purchase them on their own or secure access to the computers from a cloud provider.

Nvidia's AI chip business rose by 11% to more than $3.6 billion in sales during the last quarter.

That’s all for this edition of EGAI. See you next Friday.

But before I go, I wanted to highlight my PIONEER interview with Jay LeBoeuf of Descript. We talk about how GenAI will transform the entire audio domain, affecting everything from sound production to music and creativity.

Enjoy your weekend!

Namaste,

Nina