AI on trial: Can everything generated by AI be subject to copyright?

From litigation to content moderation sweatshops - Nina and Henry discuss the week in Generative AI

Happy Monday…

Last week was a bad week (at least headline-wise) for Generative AI. From litigation to content moderation ‘sweatshops’, some of the controversies we’ve anticipated are bubbling to the surface.

The legal challenges are the most significant — because they will be precedent-setting with broad ramifications for the entire AI/ML space. (I will be getting into the weeds of the legal cases with the help of some IP/copyright lawyers soon to break it down further.)

For now, here are Henry and me on last week’s developments.

Let’s get to it.

Stability AI and training data on trial

Two lawsuits, one a class-action suit filed by three artists in the US and the other filed in London by Getty Images, target Stability AI over copyright infringement and ‘data scraping.’ (The former also names DeviantArt and Midjourney.)

The basis for both lawsuits boils down to training data. Both argue that copyrighted images were illegally ‘scraped’ to train models like Stable Diffusion.

Notably, the US lawsuit also argued that all AI-generated output is derivative of input data — meaning that everything generated by Stable Diffusion (and by extension by AI) can be interpreted as a (potential) copyright infringement.

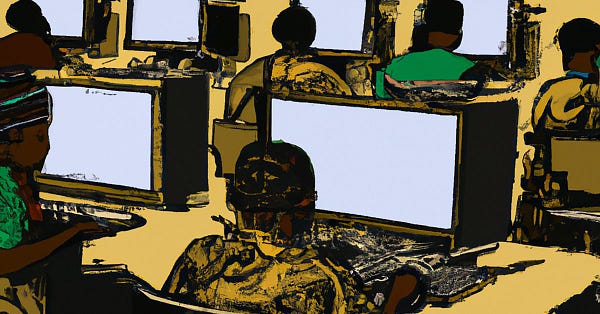

OpenAI and tech’s content moderation sweatshop problem

An investigation by TIME’s Billy Perrigo finds that OpenAI outsourced traumatic content moderation work to poorly paid Kenyan workers.

The work, intended to make ChatGPT less toxic, involved reading and labeling text that described situations like murder, child abuse, rape, animal abuse, torture and self-harm.

This is not unprecedented: earlier investigations revealed that Meta has done the same. Are these big tech’s content moderation sweatshops?

CNET outsources writing to AI — it ends badly

CNET, the well-known tech publication, has been using generative AI to write articles on money and finance.

Predictably, the articles contained errors, forcing the staff to issue corrections.

A tale of hubris: tools like ChatGPT are obviously not ready for real-world deployment without human oversight.

King of cool, Nick Cave, slams ChatGPT

Responding to fans generating lyrics in his ‘style,’ Nick Cave lambasted ChatGPT, calling it a ‘grotesque mockery of what it means to be human.’

Love or hate ChatGPT, its cultural and social impact is, to me, an indicator of the great AI debates to come.

Breakthrough or Bullshit?

We both agree that the litigation against Stability AI is a big deal, as it will be precedent-setting. I don’t think that the arguments about copyright and synthetic ‘derivatives’ are necessarily sound, but a court may decide otherwise. We will see.

We also think the CNET story is a ‘breakthrough’ in the sense that it is the first of many more to come. The demand for content will be so huge that organisations will inevitably use AI tools to produce content - even when the outputs are spammy or simply incorrect.

Watch this space and enjoy the rest of the week…

Nina